This is the next post after “The Private Agent” and the M5 Pro/Max follow-up. We’re answering: how do you turn a MacBook Pro M1 Max with 64GB unified memory into a modern OpenClaw agent swarm that rotates the best local model per role, keeps the stack fully local, and reuses the workflows hundreds of OpenClaw teams already ship with minimal fuss?

TL;DR

- LM Studio 0.4’s headless llmster daemon gives the M1 Max 64 a server-grade inference stack: parallel requests, Max Concurrent Predictions, and a RESTful API that OpenClaw can hit from localhost without running the UI.

- Keep a trio of Qwen 3.5 variants (9B fast orchestrator, 27B middle lane, 35B-A3B heavy reasoner) plus a lightweight embedder on standby so each agent only wakes the model it needs.

- OpenClaw already exposes declarative models.providers and agents.defaults.model entries, so routers like LiteLLM, ClawRouter, ClawPane, and the Task Router skill can all plug into the same manifest without editing every agent.

- Tune LM Studio’s load settings (--context-length, --gpu, --ttl, Max Concurrent Predictions) so the 64GB pool keeps the 9B orchestrator hot while the 27B/35B weights load only when a job demands them.

- The leaked M5 Ultra H17D hints at a 512GB+ workstation that will let dozens of models stay resident, but the price gap to a $14k Mac Studio means the M1 Max remains the best cost/performance local OpenClaw brain for most teams.

Why these keywords matter

Repeating phrases like “M1 Max OpenClaw model routing,” “local OpenClaw agent swarm,” and “role-based LM selection” keeps the page aligned with the queries hardware decision makers are typing, and it mirrors the SEO momentum we built with the earlier Private Agent guides.

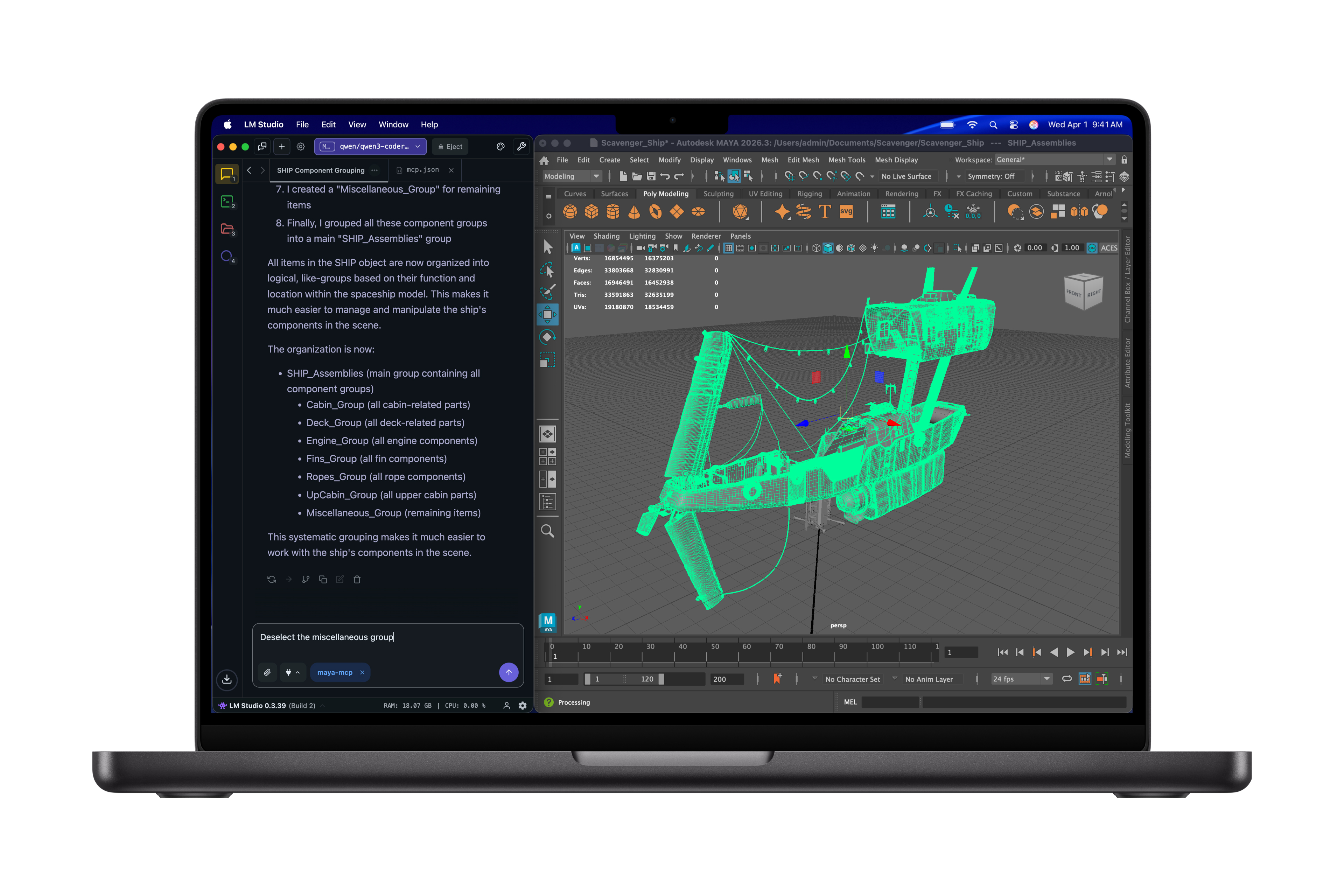

Step 1: LM Studio 0.4 + the M1 Max 64 foundation

LM Studio 0.4 introduced the headless llmster daemon, parallel inference via continuous batching, and a stateful /v1/chat API you can hit from OpenClaw as if it were OpenAI. Run the daemon, keep the CLI handy for downloads, and keep only the models you actually need loaded:

lms runtime update mlx

lms runtime update llama.cpp

lms get lmstudio-community/Qwen3.5-9B-GGUF

lms get lmstudio-community/Qwen3.5-27B-GGUF

lms get lmstudio-community/Qwen3.5-35B-A3B-GGUF

lms get nomic-ai/nomic-embed-text-v1.5

lms load lmstudio-community/Qwen3.5-9B-GGUF --identifier qwen35-fast --context-length 262144 --gpu max --ttl 600

lms load lmstudio-community/Qwen3.5-27B-GGUF --identifier qwen35-reasoner --context-length 262144 --gpu 0.7 --ttl 900

lms load lmstudio-community/Qwen3.5-35B-A3B-GGUF --identifier qwen35-specialist --context-length 262144 --gpu 0.5 --ttl 1200

lms load nomic-ai/nomic-embed-text-v1.5 --identifier embed-text --gpu 0.2

lms server start --port 1234

With the CLI you can also run lms load --estimate-only to experiment with context length vs. VRAM before warming the model, and LM Studio will honor the TTL, concurrency, and GPU offload settings you provide. The M1 Max keeps the entire stack inside one 64GB pool, so plan your loads so that the 9B orchestrator stays hot while the 27B/35B weights cold-start only when the router demands extra depth.
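Once the server is up, a quick smoke test helps confirm that the identifiers from the lms load commands resolve. The sketch below is a minimal example using only the Python standard library. It assumes the server listens on port 1234 as started above, that you call the OpenAI-compatible /v1/chat/completions route LM Studio’s local server provides, and that qwen35-fast is loaded; the helper names build_chat_request and send are ours, not part of any SDK.

```python
import json
import urllib.request

# Assumption: port matches `lms server start --port 1234`.
BASE_URL = "http://127.0.0.1:1234/v1"


def build_chat_request(model: str, prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # the --identifier given to `lms load`
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With the daemon running, send(build_chat_request("qwen35-fast", "Reply with one word: ready")) should return the orchestrator’s reply; swapping the identifier for qwen35-reasoner exercises the cold-start path.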

Step 2: Build a role-specific model roster

The local swarm works when each OpenClaw agent is pinned to the right model for its role. Here’s our roster on the M1 Max 64:

- Fast orchestrator / router: Qwen 3.5 9B (the GGUF weights from LM Studio run at 262k token context and feel “years ahead” of earlier 9B builds once tool calling is stabilized).

- Reasoning and planner: Qwen 3.5 27B (dense) for long documents and intermediate thinking, plus Qwen 3.5 35B-A3B when you need vision + MoE depth at 262k context.

- Coder specialist: Qwen 3.5 Coder or Qwen Coder Next from the same Qwen family for precise diffs and API generation (load as the coder provider).

- Embeddings: nomic-ai/nomic-embed-text-v1.5, which is the open-weight text embedder most teams trust for stable vector quality across context lengths.

- Fallback families: Mistral 7B, DeepSeek R1, Llama 3.1, or another LM you already trust can sit cold with TTL and be triggered again by the router if the hint or policy calls for it.

This roster covers the high-context, tool-heavy, and embedding-heavy parts of the swarm without duplicating providers. The manifest stays KISS (one entry per model) and DRY (every agent just points at a model identifier).
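One way to keep that roster DRY in your own glue code is a single lookup table from role to model identifier. This is a sketch under assumptions: the identifiers and TTLs are the ones from the lms load commands in Step 1, while the role names, the ModelSlot type, and the fallback-to-orchestrator rule are illustrative choices of ours. The coder model is left out because Step 1 does not load it.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class ModelSlot:
    identifier: str              # value passed to `lms load --identifier`
    ttl_seconds: Optional[int]   # idle TTL from Step 1; None if no --ttl was set


# Role names are hypothetical labels; identifiers and TTLs come from Step 1.
ROSTER: Dict[str, ModelSlot] = {
    "orchestrator": ModelSlot("qwen35-fast", 600),
    "planner": ModelSlot("qwen35-reasoner", 900),
    "specialist": ModelSlot("qwen35-specialist", 1200),
    "librarian": ModelSlot("embed-text", None),
}


def model_for(role: str) -> str:
    """Return the model identifier for a role, defaulting to the orchestrator."""
    return ROSTER.get(role, ROSTER["orchestrator"]).identifier
```

Agents then ask for model_for("planner") instead of hard-coding a model name, so changing the roster is a one-line edit.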

Latest models in this stack

- lmstudio-community/Qwen3.5-9B-GGUF for the orchestrator and tool router.

- lmstudio-community/Qwen3.5-27B-GGUF and lmstudio-community/Qwen3.5-35B-A3B-GGUF for heavy reasoning and multimodal threads.

- nomic-ai/nomic-embed-text-v1.5 for retrieval and librarian duties.

- Optional families like Mistral 7B, DeepSeek R1, or Llama 3.1 diversify failure modes and provide lower-cost fallback lanes.

How OpenClaw chooses the right local model per role

OpenClaw’s openclaw.json manifest keeps models.providers separate from agents.defaults.model.primary, so every agent can reuse the same LM Studio provider entry while each router skill rewrites the model field before the request hits llmster. The manifest names a single LM Studio provider, and the router skill (or the router agent itself) decides which of the 9B/27B/35B models the incoming prompt should hit.

Community routers like LiteLLM, ClawRouter, and ClawPane plug exactly there: they sit between OpenClaw and the providers, score each prompt’s complexity/latency/cost, and return the best model URL so you never manually touch the agent config again. The Task Router skill from ClawHub adds capability-based queueing on top of that manifest, so you can tag work, let the router find the right agent, and rebalance load, keeping the multi-agent system DRY.
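To make the split between models.providers and agents.defaults.model.primary concrete, here is a hedged sketch that assembles a manifest as a Python dict and can dump it as JSON. Only those two key paths come from this post. Everything inside them (the lmstudio provider name, baseUrl, apiKey, the models list with id and contextWindow, and the provider/model string format) is an assumption to verify against the OpenClaw configuration reference before use.

```python
import json
from typing import Any, Dict

# Port from `lms server start --port 1234`; the /v1 suffix is an assumption.
LMSTUDIO_BASE_URL = "http://127.0.0.1:1234/v1"


def build_manifest(primary: str = "qwen35-fast") -> Dict[str, Any]:
    """Assemble a single-provider manifest; inner field names are assumed."""
    model_ids = ["qwen35-fast", "qwen35-reasoner", "qwen35-specialist"]
    return {
        "models": {
            "providers": {
                "lmstudio": {                      # provider name is our choice
                    "baseUrl": LMSTUDIO_BASE_URL,  # assumed field name
                    "apiKey": "lmstudio",          # placeholder value
                    "models": [                    # assumed shape
                        {"id": model_id, "contextWindow": 262144}
                        for model_id in model_ids
                    ],
                }
            }
        },
        # Every agent inherits this default unless a router rewrites the model.
        "agents": {"defaults": {"model": {"primary": f"lmstudio/{primary}"}}},
    }
```

Running print(json.dumps(build_manifest(), indent=2)) yields a document you can compare against your own openclaw.json; the point of the shape is that one provider entry serves every agent and only the primary string changes.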

Step 3: Rotate models with explicit routing

There are two practical routing strategies on M1 Max:

- Role-based routing in OpenClaw – pin the orchestrator, planner, coder, and librarian agents to the models listed above, and let the router agent hand off work via tags and task-specific prompts.

- Add a dedicated router – point OpenClaw at LiteLLM, ClawRouter, or ClawPane, and let that system pick which LM Studio-loaded model (or a remote provider) handles the job, using usage-, latency-, or cost-based preferences.

LiteLLM’s router lets you define routing strategies such as usage-based-routing-v2, least-busy, latency-based, or cost-based and match jobs to models before any HTTP request is emitted. ClawRouter and ClawPane do the same scoring inside OpenClaw, and they both reuse the single manifest so new agents never need to know which model is live unless the router informs them.
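The two strategies can be illustrated with a few lines of plain Python. This is a toy sketch, not LiteLLM’s, ClawRouter’s, or ClawPane’s implementation: the tag names, the character threshold, and the function names are hypothetical, and the identifiers are the ones loaded in Step 1. route mimics role-based selection from tags and prompt size, and least_busy mimics a least-busy strategy over in-flight request counts.

```python
from typing import Dict, Iterable

# Hypothetical cutoff: prompts longer than this go to the 27B planner.
LONG_PROMPT_CHARS = 24_000


def route(prompt: str, tags: Iterable[str] = ()) -> str:
    """Pick a model identifier from task tags and prompt length."""
    tag_set = set(tags)
    if "embed" in tag_set:
        return "embed-text"
    if "vision" in tag_set:
        return "qwen35-specialist"
    if "plan" in tag_set or len(prompt) > LONG_PROMPT_CHARS:
        return "qwen35-reasoner"
    return "qwen35-fast"


def least_busy(candidates: Iterable[str], in_flight: Dict[str, int]) -> str:
    """Return the candidate with the fewest in-flight requests (first wins ties)."""
    return min(candidates, key=lambda name: in_flight.get(name, 0))
```

A dedicated router replaces these hand-written rules with scored policies, but the contract is the same: a prompt goes in, a model identifier comes out, and the agent config never changes.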

LM Studio tuning for high-context, parallel model use

Enable parallel requests per model

LM Studio lets you set Max Concurrent Predictions for each model load so that multiple OpenClaw agents can hit the same GGUF instance simultaneously rather than queueing. Continuous batching keeps the GPU saturated even when the orchestrator, coder, and retrieval agents fire at once, which is the normal traffic pattern in a routed swarm.
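On the client side it helps to cap in-flight requests at the same number you configured in LM Studio, so extra work waits in your process instead of piling up at the server. A minimal sketch with the standard library follows; the limit of 4 is an assumed value to match against your own Max Concurrent Predictions setting, and send stands for whatever function posts one prompt to the local server.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence

# Assumption: mirror the Max Concurrent Predictions value set for the model.
MAX_CONCURRENT_PREDICTIONS = 4


def run_parallel(
    prompts: Sequence[str],
    send: Callable[[str], str],
    limit: int = MAX_CONCURRENT_PREDICTIONS,
) -> List[str]:
    """Send prompts with at most `limit` in flight; results keep input order."""
    if limit < 1:
        raise ValueError("limit must be at least 1")
    # The pool size is the concurrency cap: no more than `limit` calls run at once.
    with ThreadPoolExecutor(max_workers=limit) as pool:
        return list(pool.map(send, prompts))
```

Because pool.map preserves order, the orchestrator can fan out a batch of tool calls and zip the answers back to the agents that asked for them.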

Tune load-time VRAM and idle policies

Use lms load flags to declare the memory budget per model: --context-length 262144 (the native window for all three Qwen 3.5 variants), --gpu max or a fractional value between 0 and 1 that sets how much of the model is offloaded to the GPU, and --ttl to evict the heftiest models after they sit idle for a few minutes. The --estimate-only flag lets you preview the estimated memory usage without actually loading the weights.
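If you script your loads, generating the command line from one function keeps the flags consistent across models. The helper below is a sketch: it only emits the flags already used in this post (--identifier, --context-length, --gpu, --ttl, --estimate-only), the function name lms_load_args is ours, and the accepted --gpu values (off, max, or a number from 0 to 1) reflect our reading of the CLI reference.

```python
import shlex
from typing import List, Optional, Union


def lms_load_args(
    model_key: str,
    identifier: str,
    context_length: Optional[int] = None,
    gpu: Optional[Union[str, float]] = None,
    ttl: Optional[int] = None,
    estimate_only: bool = False,
) -> List[str]:
    """Build the argv list for one `lms load` invocation."""
    args = ["lms", "load", model_key, "--identifier", identifier]
    if context_length is not None:
        args += ["--context-length", str(context_length)]
    if gpu is not None:
        is_ratio = isinstance(gpu, (int, float)) and 0 <= gpu <= 1
        if gpu not in ("off", "max") and not is_ratio:
            raise ValueError("gpu must be 'off', 'max', or a number in [0, 1]")
        args += ["--gpu", str(gpu)]
    if ttl is not None:
        args += ["--ttl", str(ttl)]  # idle seconds before eviction
    if estimate_only:
        args.append("--estimate-only")
    return args
```

Passing the list to subprocess.run(args, check=True) runs the load, and shlex.join(args) prints the exact command for your notes.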

Maximize the native context windows

Qwen 3.5 9B, 27B, and 35B-A3B all share a 262,144-token native context window and can extend toward 1,000,000 tokens when your routing policy allows for chunked caching. Keep that context alive on the router by pinning long-form tasks to the 27B/35B paths when the job demands it, while the 9B orchestrator handles quick tool calls.
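Long contexts are not free: the key/value cache grows linearly with the number of tokens held. The sketch below applies the textbook estimate for a standard attention stack, 2 (keys and values) × layers × KV heads × head dimension × tokens × bytes per element. The architecture numbers in the usage note are placeholders chosen for round arithmetic, not Qwen 3.5’s real configuration, and models with non-standard attention layers will deviate from this estimate, so treat lms load --estimate-only as the authority.

```python
def kv_cache_gib(
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    context_tokens: int,
    bytes_per_element: float = 2.0,  # 2.0 = fp16 cache, 1.0 = 8-bit cache
) -> float:
    """Estimate KV cache size in GiB for a standard attention stack."""
    total_bytes = (
        2  # one tensor for keys, one for values
        * n_layers
        * n_kv_heads
        * head_dim
        * context_tokens
        * bytes_per_element
    )
    return total_bytes / 2**30
```

For a hypothetical model with 32 layers, 8 KV heads, and a head dimension of 128, kv_cache_gib(32, 8, 128, 262144) comes to 32.0 GiB at fp16 and 16.0 GiB with an 8-bit cache, which is why a full 262,144-token window on several models at once will not fit in a 64GB pool.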

Community signal: the 9B variant often feels “good enough”

Redditors and OpenClaw operators report that once the 9B model has flash attention and KV quantization enabled, it rarely falls short on tool-heavy, reminder-style workflows, making it the default orchestrator on an M1 Max 64. That said, the same community still loads the 27B/35B models whenever a multi-agent loop needs longer reasoning or vision context before returning to the fast orchestrator.

Performance tuning and the M5 Ultra cost calculus

Keep one fast orchestrator hot, unload the heavyweight models when they’re idle, and use a compact embedder so vector math never monopolizes the 64GB pool. LM Studio’s lms unload and --ttl guards make this easy.
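To reason about which models will still be resident at a given moment, you can model the idle-TTL rule in a few lines. This is a conceptual sketch of the policy, not LM Studio’s code: it assumes a model becomes eligible for eviction once it has been idle for at least its TTL, and that a model loaded without --ttl is never evicted. The TTL values in the usage note are the ones from Step 1.

```python
from typing import Dict, List


def idle_evictions(
    last_used: Dict[str, float],
    ttl_seconds: Dict[str, float],
    now: float,
) -> List[str]:
    """Return identifiers whose idle time has reached their TTL, sorted by name."""
    return sorted(
        name
        for name, used_at in last_used.items()
        if name in ttl_seconds and now - used_at >= ttl_seconds[name]
    )
```

With TTLs of {"qwen35-fast": 600, "qwen35-reasoner": 900, "qwen35-specialist": 1200}, an orchestrator that is called every few seconds never reaches its TTL, while the 27B model drops out 15 minutes after its last planning job.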

At the same time, the leaked M5 Ultra references (T6052/H17D in the iOS 26.3 RC) hint at a 512GB+ successor that could keep multi-model swarms resident indefinitely, but the rumored price will likely mirror the $14,099 maxed-out M3 Ultra Mac Studio, so the hardware premium for that RAM is still five figures compared to the ~$2k M1 Max base. That makes the 64GB M1 Max still the most realistic local OpenClaw brain for teams that want secure, uncensored inference without a multi-cloud bill.

Security and configuration hygiene (keep it KISS + DRY)

OpenClaw’s security guide reminds every operator to keep the gateway bound to 127.0.0.1, audit every skill before installation, and treat the agent runtime like any other privileged process. Microsoft’s supplemental posture guidance calls out identity, isolation, and runtime risk controls that every local cluster should document before ramping to production. These practices pair with the declarative models.providers manifest from above so you never expose another service to the internet while still routing dozens of agents through LM Studio on the same machine.
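A cheap guard for the loopback rule is to check every provider URL before the swarm starts. The sketch below uses only the standard library; the function name is ours, and treating the hostname localhost as loopback is an assumption that holds on a default macOS hosts file but is worth confirming on a customized machine.

```python
import ipaddress
from urllib.parse import urlsplit


def is_loopback_url(url: str) -> bool:
    """Return True if the URL's host is a loopback address or 'localhost'."""
    host = urlsplit(url).hostname
    if host is None:
        return False
    if host == "localhost":  # assumption: resolves to loopback on this machine
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname would need DNS resolution; reject it to stay safe.
        return False
```

Failing fast when is_loopback_url returns False for a provider base URL catches the common mistake of binding to 0.0.0.0 or a LAN address while experimenting.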

Sources

- LM Studio 0.4.0 blog (introducing llmster, parallel inference, and REST API).

- LM Studio docs – parallel requests and Max Concurrent Predictions.

- LM Studio CLI lms load reference (context length, GPU offload, TTL).

- Xiandai article on LM Studio 0.4 developer mode for concurrency tips.

- Qwen3.5 model guide (35B-A3B, 27B, 9B share 262,144-token contexts).

- Reddit thread praising Qwen3.5-9B for orchestration.

- Reddit thread noting 27B/35B gains for long-form reasoning.

- LiteLLM routing docs (usage-based, least-busy strategies).

- ClawRouter (BlockRun) smart routing site.

- ClawPane smart routing site.

- Task Router skill download page from ClawHub.

- OpenClaw + Qwen guide describing models.providers and agent config.

- nomic-embed-text-v1.5 model card on Hugging Face.

- MacRumors report on M5 Ultra leak (T6052/H17D).

- Tom’s Guide article quoting the same leak.

- MacRumors article about a maxed-out M3 Ultra Mac Studio costing $14,099.

- OpenClaw security guide (“Is OpenClaw Safe?”).

- Microsoft security blog on safe OpenClaw posture.